We hear a lot about AI safety, but does that mean it features heavily in research?

A new study from Georgetown University’s Emerging Technology Observatory suggests that, despite the noise, AI safety research accounts for only a tiny minority of the industry’s research focus.

The researchers analyzed over 260 million scholarly publications and found that a mere 2% of AI-related papers published between 2017 and 2022 directly addressed topics related to AI safety, ethics, robustness, or governance.

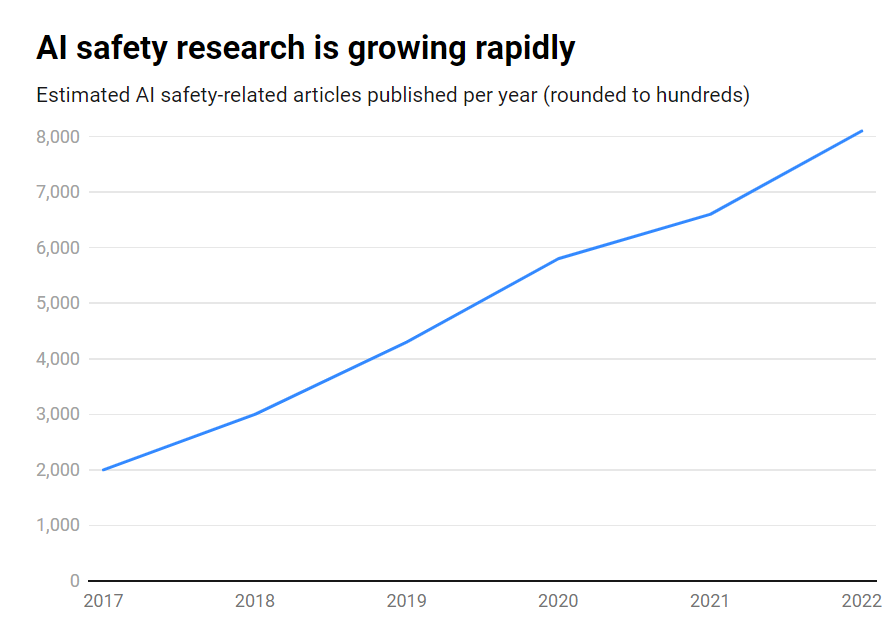

While the number of AI safety publications grew an impressive 315% over that period, from around 1,800 to over 7,000 per year, safety research is being vastly outpaced by the explosive growth in AI capabilities.
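As a rough sanity check on that growth figure, the arithmetic can be sketched in a few lines of Python. The function names here are illustrative, and the starting value of 1,800 is the rounded figure quoted above, so the result is only approximate.

```python
def percent_growth(start: float, end: float) -> float:
    """Percentage increase going from start to end."""
    return (end - start) / start * 100.0


def value_after_growth(start: float, growth_pct: float) -> float:
    """Value reached after growing start by growth_pct percent."""
    return start * (1 + growth_pct / 100.0)


# Assumption: roughly 1,800 safety papers per year at the start of the period.
start_per_year = 1800
implied_end = value_after_growth(start_per_year, 315)
print(round(implied_end))  # 7470, consistent with "over 7,000 per year"
```

Note that 315% growth means the yearly output ended at a little over four times its starting level, not three times.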

Here are the key findings:

- Only 2% of AI research from 2017-2022 focused on AI safety

- AI safety research grew 315% in that period, but is dwarfed by overall AI research

- The US leads in AI safety research, while China lags behind

- Key challenges include robustness, fairness, transparency, and maintaining human control

Many leading AI researchers and ethicists have warned of existential risks if artificial general intelligence (AGI) is developed without sufficient safeguards and precautions.

Imagine an AGI system that is able to recursively improve itself, rapidly exceeding human intelligence while pursuing goals misaligned with our values. It’s a scenario that some argue could spiral out of our control.

It’s not one-way traffic, however. In fact, a large number of AI researchers believe AI safety is overhyped.

Beyond that, some even think the hype has been manufactured to help Big Tech enforce regulations and eliminate grassroots and open-source competitors.

However, even today’s narrow AI systems, trained on past data, can exhibit biases, produce harmful content, violate privacy, and be used maliciously.

So, while AI safety needs to look to the future, it also needs to address risks in the here and now, an effort that is arguably insufficient as deep fakes, bias, and other issues continue to loom large.

Effective AI safety research needs to address nearer-term challenges as well as longer-term speculative risks.

The US leads AI safety research

Drilling down into the data, the US is the clear leader in AI safety research, home to 40% of related publications compared to 12% from China.

However, China’s safety output lags far behind its overall AI research: while 5% of American AI research touched on safety, only 1% of China’s did.

One could speculate that probing Chinese research is an altogether difficult task. Plus, China has been proactive about regulation, arguably more so than the US, so this data might not give the country’s AI industry a fair hearing.

At the institution level, Carnegie Mellon University, Google, MIT, and Stanford lead the pack.

But globally, no organization produced more than 2% of the total safety-related publications, highlighting the need for a larger, more concerted effort.

Safety imbalances

So what can be done to correct this imbalance?

That depends on whether one thinks AI safety is a pressing risk on par with nuclear war, pandemics, etc. There is no clear-cut answer to this question, making AI safety a highly speculative topic with little mutual agreement between researchers.

Safety research and ethics are also somewhat of a tangential domain to machine learning, requiring different skill sets, academic backgrounds, etc., which may not be well funded.

Closing the AI safety gap will also require confronting questions around openness and secrecy in AI development.

The largest tech companies will conduct extensive internal safety research that has never been published. As the commercialization of AI heats up, corporations are becoming more protective of their AI breakthroughs.

OpenAI, for one, was a research powerhouse in its early days.

The company used to produce in-depth independent audits of its products, labeling biases and risks, such as sexist bias in its CLIP project.

Anthropic is still actively engaged in public AI safety research, frequently publishing studies on bias and jailbreaking.

DeepMind also documented the possibility of AI models establishing ‘emergent goals’ and actively contradicting their instructions or becoming adversarial to their creators.

Overall, though, safety has taken a backseat to progress as Silicon Valley lives by its motto to ‘move fast and break stuff.’

The Georgetown study ultimately highlights that universities, governments, tech companies, and research funders need to invest more effort and money in AI safety.

Some have also called for an international body for AI safety, similar to the International Atomic Energy Agency (IAEA), which was established after a series of nuclear incidents that made deep international cooperation necessary.

Will AI need its own disaster to engage that level of state and corporate cooperation? Let’s hope not.