Image by Author

Large language models, or LLMs, have emerged as a driving catalyst in natural language processing. Their use cases range from chatbots and virtual assistants to content generation and translation services. They have become one of the fastest-growing fields in the tech world, and we can find them everywhere.

As the need for more powerful language models grows, so does the need for effective optimization techniques.

However, many natural questions emerge:

How to improve their knowledge?

How to improve their general performance?

How to scale these models up?

The insightful presentation titled “A Survey of Techniques for Maximizing LLM Performance” by John Allard and Colin Jarvis from OpenAI DevDay tried to answer these questions. If you missed the event, you can catch the talk on YouTube.

This presentation provided an excellent overview of various techniques and best practices for enhancing the performance of your LLM applications. This article aims to summarize the best techniques to improve both the performance and scalability of our AI-powered solutions.

Understanding the Basics

LLMs are sophisticated algorithms engineered to understand, analyze, and produce coherent and contextually appropriate text. They achieve this through extensive training on vast amounts of linguistic data covering diverse topics, dialects, and styles. Thus, they can capture how human language works.

However, when integrating these models into complex applications, there are some key challenges to consider:

Key Challenges in Optimizing LLMs

- LLM Accuracy: Ensuring that the LLM’s output is accurate and reliable, without hallucinations.

- Resource Consumption: LLMs require substantial computational resources, including GPU power, memory, and large infrastructure.

- Latency: Real-time applications demand low latency, which can be challenging given the size and complexity of LLMs.

- Scalability: As user demand grows, ensuring the model can handle increased load without degradation in performance is crucial.

Strategies for Better Performance

The first question is about “How to improve their knowledge?”

Creating a partially functional LLM demo is relatively easy, but refining it for production requires iterative improvements. LLMs may need help with tasks that require deep knowledge of specific data, systems, and processes, or precise behavior.

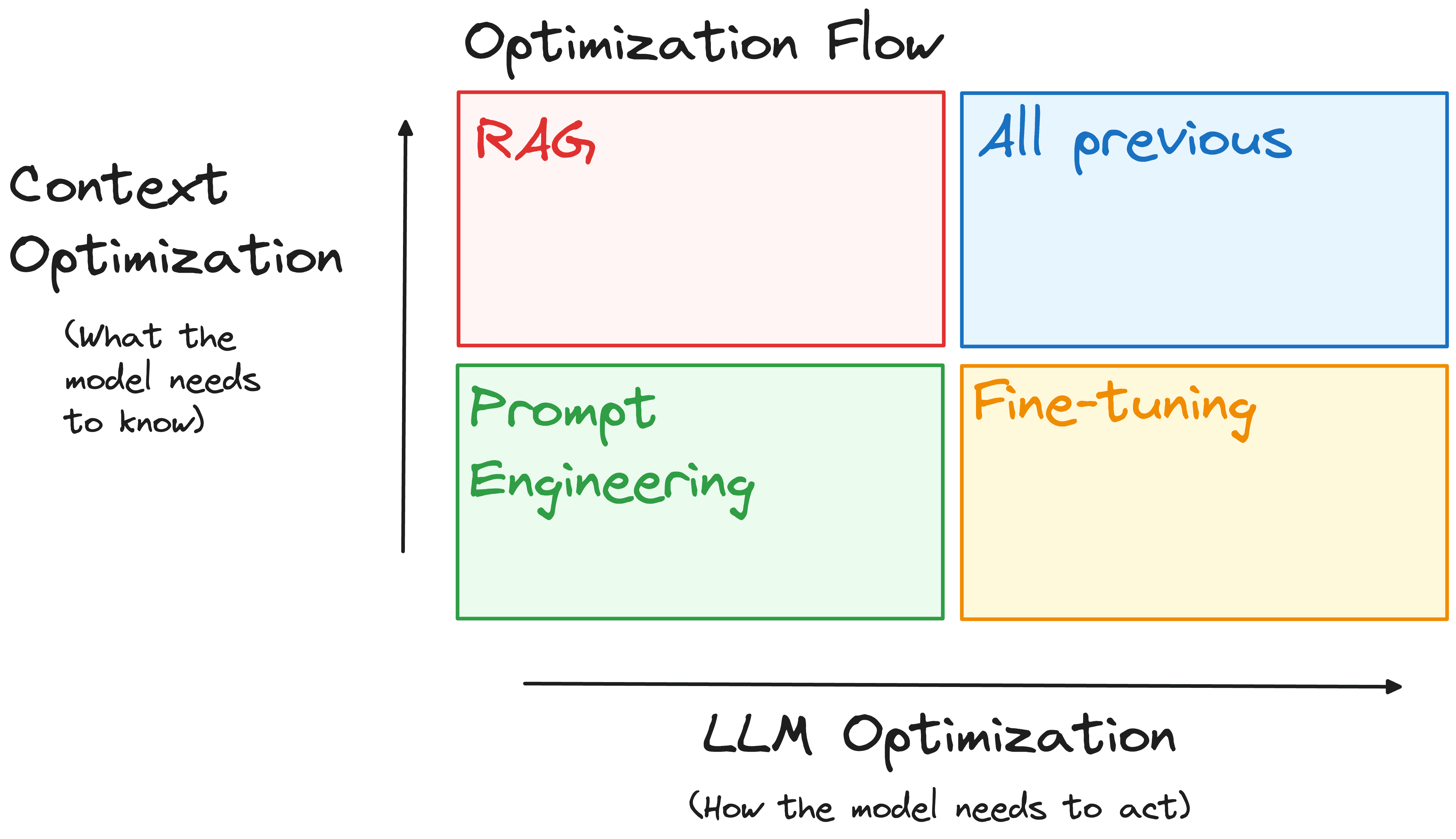

Teams use prompt engineering, retrieval augmentation, and fine-tuning to address this. A common mistake is to assume that this process is linear and should be followed in a specific order. Instead, it is more effective to approach it along two axes, depending on the nature of the issues:

- Context Optimization: Are the problems due to the model lacking access to the right information or knowledge?

- LLM Optimization: Is the model failing to generate the correct output, such as being inaccurate or not adhering to a desired style or format?

Image by Author

To address these challenges, three primary tools can be employed, each serving a unique role in the optimization process:

Prompt Engineering

Tailoring the prompts to guide the model’s responses. For instance, refining a customer service bot’s prompts to ensure it consistently provides helpful and polite responses.
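
As a small illustration of the customer service case, the sketch below keeps the behavioral instructions in a reusable system prompt. The template wording, the `build_messages` helper, and the company name are assumptions made for this example; the chat-style message list mirrors a common convention rather than any specific API.

```python
# Hypothetical system prompt template for a customer service bot.
SYSTEM_TEMPLATE = (
    "You are a customer service assistant for {company}. "
    "Always answer politely, keep replies under {max_sentences} sentences, "
    "and say you do not know when the answer is not in the provided information."
)

def build_messages(company, user_question, max_sentences=3):
    """Return a chat-style message list with the instructions in the system role."""
    system_prompt = SYSTEM_TEMPLATE.format(company=company, max_sentences=max_sentences)
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_question},
    ]

messages = build_messages("Acme", "Where is my order?")
```

Iterating on the template while holding the user questions fixed makes it easier to compare prompt versions.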

Retrieval-Augmented Generation (RAG)

Enhancing the model’s context understanding through external data. For example, integrating a medical chatbot with a database of the latest research papers to provide accurate and up-to-date medical advice.
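
To make the retrieval step concrete, here is a minimal sketch that ranks a few in-memory documents by word overlap with the question and prepends the best match to the prompt. The `DOCUMENTS` list, the helper names, and the overlap scoring are all assumptions for illustration; a production system would more likely use embeddings and a vector store.

```python
import re

# Hypothetical in-memory knowledge base; a real system would query a document store.
DOCUMENTS = [
    "Ibuprofen is a nonsteroidal anti-inflammatory drug used to reduce fever and pain.",
    "Kubernetes is a platform for orchestrating containers across nodes.",
    "Quantization stores model weights in lower-precision formats.",
]

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Return the top_k documents sharing the most words with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, documents):
    """Prepend the retrieved context to the question before calling the model."""
    context = "\n".join(retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

prompt = build_prompt("What is ibuprofen used for?", DOCUMENTS)
```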

Fine-Tuning

Modifying the base model to better suit specific tasks. For example, fine-tuning a legal document analysis tool on a dataset of legal texts to improve its accuracy in summarizing legal documents.

The process is highly iterative, and not every technique will work for your specific problem. However, many techniques are additive. When you find a solution that works, you can combine it with other performance improvements to achieve optimal results.

Strategies for Optimized Performance

The second question is about “How to improve their general performance?”

Once we have an accurate model, the next concern is inference time. Inference is the process where a trained language model, like GPT-3, generates responses to prompts or questions in real-world applications (like a chatbot).

It is a critical stage where models are put to the test, generating predictions and responses in practical scenarios. For large LLMs like GPT-3, the computational demands are enormous, making optimization during inference essential.

Consider a model like GPT-3, which has 175 billion parameters, equivalent to 700 GB of float32 data. This size, coupled with activation requirements, necessitates significant RAM. This is why running GPT-3 without optimization would require an extensive setup.
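
The 700 GB figure follows directly from the parameter count: each float32 value takes 4 bytes. The short sketch below reproduces that arithmetic and shows what lower-precision formats would need for the weights alone (activations excluded). The helper name is made up for this example, and 1 GB is taken as 10^9 bytes.

```python
def weights_size_gb(num_parameters, bytes_per_parameter):
    """Memory needed for the weights alone, in gigabytes (1 GB = 10**9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9

GPT3_PARAMETERS = 175_000_000_000  # 175 billion, as quoted above

float32_gb = weights_size_gb(GPT3_PARAMETERS, 4)  # 4 bytes per float32 value
float16_gb = weights_size_gb(GPT3_PARAMETERS, 2)  # 2 bytes per float16 value
int8_gb = weights_size_gb(GPT3_PARAMETERS, 1)     # 1 byte per int8 value

print(float32_gb, float16_gb, int8_gb)  # 700.0 350.0 175.0
```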

Some techniques can be used to reduce the amount of resources required to run such applications:

Model Pruning

It involves trimming non-essential parameters so that only those crucial to performance remain. This can drastically reduce the model’s size without significantly compromising its accuracy.

This means a significant decrease in the computational load while keeping accuracy close to that of the original model. You can find easy-to-implement pruning code in the following GitHub.
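
One simple variant is magnitude pruning, which zeroes the weights with the smallest absolute values. The sketch below applies it to a plain Python list to show the idea; the function name and the sample weights are illustrative assumptions, and this is not the code from the repository mentioned above.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest absolute value."""
    num_to_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest magnitude
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned_indices = set(order[:num_to_prune])
    return [0.0 if i in pruned_indices else w for i, w in enumerate(weights)]

weights = [0.8, -0.05, 0.3, 0.01, -0.9, 0.02]
pruned = magnitude_prune(weights, sparsity=0.5)
print(pruned)  # [0.8, 0.0, 0.3, 0.0, -0.9, 0.0]
```

In practice the zeroed weights only save memory or compute when stored in a sparse format or removed in whole structured blocks.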

Quantization

It is a model compression technique that converts the weights of an LLM from high-precision variables to lower-precision ones. This means we can reduce the 32-bit floating-point numbers to lower-precision formats like 16-bit or 8-bit, which are more memory-efficient. This can drastically reduce the memory footprint and improve inference speed.
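
To see what the conversion does numerically, the sketch below applies a basic absmax scheme: every weight is divided by a shared scale so that the largest magnitude maps to 127, then rounded to an integer. This is one simple scheme chosen for illustration, with made-up function names and sample values; real libraries use more refined variants.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single absmax scale."""
    scale = max(abs(w) for w in weights) / 127
    if scale == 0:
        scale = 1.0  # all-zero input: any scale reproduces it
    return [round(w / scale) for w in weights], scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.5, -1.27, 0.02, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
```

Each restored value differs from the original by at most half the scale, which is the precision traded for the 4x smaller storage.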

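LLMs can be easily loaded in a quantized manner using Hugging Face Transformers and bitsandbytes. This allows us to run and fine-tune LLMs on lower-power hardware.

from transformers import AutoModelForSequenceClassification, AutoTokenizer, BitsAndBytesConfig

# Placeholder checkpoint for illustration; replace it with the model you want to load
model_name = "facebook/opt-350m"

# Load the weights in 8-bit via bitsandbytes (requires the bitsandbytes package and a supported GPU)
quantization_config = BitsAndBytesConfig(load_in_8bit=True)
tokenizer = AutoTokenizer.from_pretrained(model_name)
quantized_model = AutoModelForSequenceClassification.from_pretrained(
    model_name,
    quantization_config=quantization_config,
)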

Distillation

It is the process of training a smaller model (the student) to mimic the performance of a larger model (the teacher). The student model is trained to reproduce the teacher’s predictions, using a combination of the teacher’s output logits and the true labels. By doing so, we can achieve similar performance with a fraction of the resource requirements.
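
The combination of teacher logits and true labels can be sketched as a weighted loss. The version below follows a common formulation (temperature-softened KL divergence scaled by the squared temperature, plus cross-entropy on the label); the `temperature` and `alpha` values and the function names are assumptions for illustration, not settings taken from the talk.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax with an optional temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, true_label,
                      temperature=2.0, alpha=0.5):
    """Blend a soft loss against the teacher with a hard loss against the label."""
    teacher_probs = softmax(teacher_logits, temperature)
    student_probs = softmax(student_logits, temperature)
    # KL divergence between the softened teacher and student distributions
    soft_loss = sum(t * math.log(t / s) for t, s in zip(teacher_probs, student_probs))
    # Standard cross-entropy of the student against the true label
    hard_loss = -math.log(softmax(student_logits)[true_label])
    return alpha * (temperature ** 2) * soft_loss + (1 - alpha) * hard_loss

teacher = [2.0, 0.5, -1.0]
matched = distillation_loss([2.0, 0.5, -1.0], teacher, true_label=0)
mismatched = distillation_loss([-1.0, 0.5, 2.0], teacher, true_label=0)
```

A student that agrees with the teacher gets the lower loss, which is the signal that drives training.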

The idea is to transfer the knowledge of larger models to smaller ones with a simpler architecture. One of the best-known examples is DistilBERT.

This model is the result of mimicking the performance of BERT. It is a smaller version of BERT that retains 97% of its language understanding capabilities while being 60% faster and 40% smaller in size.

Techniques for Scalability

The third question is about “How to scale these models up?”

This step is often crucial. An operational system can behave very differently when used by a handful of users versus when it scales up to accommodate intensive usage. Here are some techniques to address this challenge:

Load-balancing

This approach distributes incoming requests efficiently, ensuring optimal use of computational resources and a dynamic response to demand fluctuations. For instance, to offer a widely used service like ChatGPT across different countries, it is better to deploy multiple instances of the same model.

Effective load-balancing techniques include:

Horizontal Scaling: Add more model instances to handle increased load. Use container orchestration platforms like Kubernetes to manage these instances across different nodes.

Vertical Scaling: Upgrade existing machine resources, such as CPU and memory.
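
As a toy picture of how requests are spread across horizontally scaled instances, the sketch below implements round-robin routing, one of the simplest balancing policies. The class and the instance names are hypothetical; in production this job usually belongs to a dedicated load balancer or the orchestration platform.

```python
from itertools import cycle

class RoundRobinBalancer:
    """Hand each incoming request to the next model instance in turn."""

    def __init__(self, instances):
        self._instances = cycle(instances)

    def route(self, request):
        """Return the (instance, request) pair chosen for this request."""
        return next(self._instances), request

# Hypothetical instance names standing in for deployed model replicas
balancer = RoundRobinBalancer(["model-a", "model-b", "model-c"])
assignments = [balancer.route(f"request-{i}")[0] for i in range(5)]
print(assignments)  # ['model-a', 'model-b', 'model-c', 'model-a', 'model-b']
```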

Sharding

Model sharding distributes segments of a model across multiple devices or nodes, enabling parallel processing and significantly reducing latency. Fully Sharded Data Parallelism (FSDP) offers the key advantage of utilizing a diverse array of hardware, such as GPUs, TPUs, and other specialized devices in multiple clusters.

This flexibility allows organizations and individuals to optimize their hardware resources according to their specific needs and budget.
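
To illustrate the basic idea of splitting a model across devices, the sketch below assigns contiguous groups of layers to a list of devices as evenly as possible. This is a deliberately naive layer-placement example with made-up layer and device names; it is not FSDP, which shards parameters, gradients, and optimizer state across data-parallel workers.

```python
def shard_layers(layer_names, devices):
    """Assign contiguous groups of layers to devices as evenly as possible."""
    base, extra = divmod(len(layer_names), len(devices))
    placement, start = {}, 0
    for index, device in enumerate(devices):
        # The first `extra` devices take one additional layer each
        size = base + (1 if index < extra else 0)
        placement[device] = layer_names[start:start + size]
        start += size
    return placement

layers = [f"layer_{i}" for i in range(10)]
placement = shard_layers(layers, ["gpu:0", "gpu:1", "gpu:2"])
```

With ten layers and three devices, the first device holds four layers and the other two hold three each.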

Caching

Implementing a caching mechanism reduces the load on your LLM by storing frequently accessed results, which is especially beneficial for applications with repetitive queries. Caching these frequent queries can save significant computational resources by eliminating the need to process the same requests repeatedly.
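
A minimal way to try this out is Python’s built-in `functools.lru_cache`, which memoizes results keyed on the exact prompt string. In the sketch below, `expensive_llm_call` is a hypothetical stand-in for a real model call, and exact-match caching is an assumption; paraphrased prompts would need something like semantic caching.

```python
from functools import lru_cache

call_count = 0

def expensive_llm_call(prompt):
    """Hypothetical stand-in for a real model call; counts how often it runs."""
    global call_count
    call_count += 1
    return f"response to: {prompt}"

@lru_cache(maxsize=1024)
def cached_completion(prompt):
    """Return the cached response when the same prompt was already answered."""
    return expensive_llm_call(prompt)

first = cached_completion("What is quantization?")
second = cached_completion("What is quantization?")  # served from the cache
```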

Additionally, batch processing can optimize resource usage by grouping similar tasks.
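
Grouping can be as simple as slicing the pending requests into fixed-size chunks so that each chunk goes through the model in a single forward pass. The helper below is a hypothetical sketch of that step; real serving stacks often batch dynamically based on arrival time and sequence length.

```python
def make_batches(requests, batch_size):
    """Split the pending requests into consecutive batches of at most batch_size."""
    return [requests[i:i + batch_size] for i in range(0, len(requests), batch_size)]

pending = ["prompt-1", "prompt-2", "prompt-3", "prompt-4", "prompt-5"]
batches = make_batches(pending, batch_size=2)
print(batches)  # [['prompt-1', 'prompt-2'], ['prompt-3', 'prompt-4'], ['prompt-5']]
```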

Conclusion

For those building applications reliant on LLMs, the techniques discussed here are crucial for maximizing the potential of this transformative technology. Mastering and effectively applying strategies to get more accurate output from our model, optimize its performance, and allow it to scale are essential steps in evolving from a promising prototype to a robust, production-ready model.

To fully understand these techniques, I highly recommend digging deeper into the details and starting to experiment with them in your LLM applications for optimal results.

Josep Ferrer is an analytics engineer from Barcelona. He graduated in physics engineering and is currently working in the data science field applied to human mobility. He is a part-time content creator focused on data science and technology. Josep writes on all things AI, covering the application of the ongoing explosion in the field.