AI deepfakes weren’t on the risk radar of organisations just a short time ago, but in 2024, they are rising up the ranks. With the potential to cause anything from a share price tumble to a loss of brand trust through misinformation, AI deepfakes are likely to feature as a risk for some time.

Robert Huber, chief security officer and head of research at cyber security firm Tenable, argued in an interview with TechRepublic that AI deepfakes could be used by a range of malicious actors. While detection tools are still maturing, APAC enterprises can prepare by adding deepfakes to their risk assessments and better protecting their own content.

Ultimately, more protection for organisations is likely when international norms converge around AI. Huber called on larger tech platform players to step up with stronger and clearer identification of AI-generated content, rather than leaving this to non-expert individual users.

AI deepfakes are a rising risk for society and businesses

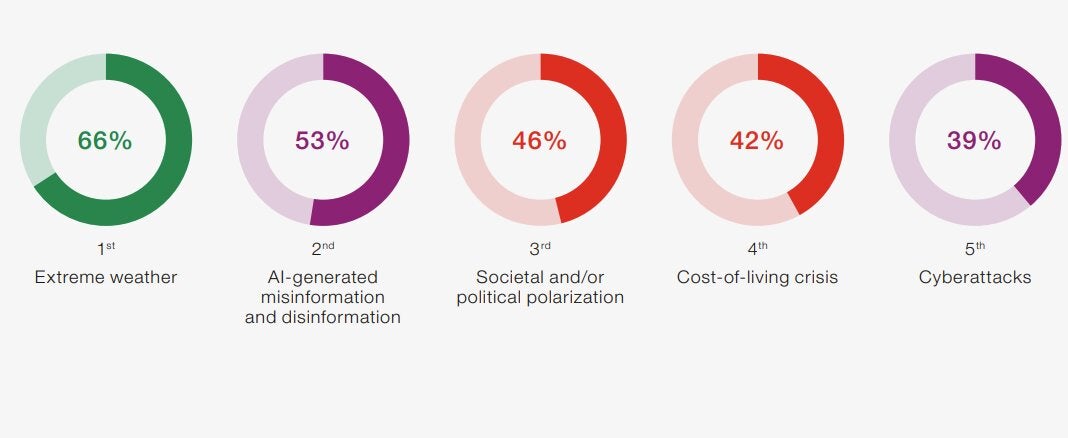

The risk of AI-generated misinformation and disinformation is emerging as a global risk. In 2024, following the launch of a wave of generative AI tools in 2023, the risk category as a whole was the second-largest risk in the World Economic Forum’s Global Risks Report 2024 (Figure A).

Figure A

Over half (53%) of respondents, who were from business, academia, government and civil society, named AI-generated misinformation and disinformation, which includes deepfakes, as a risk. Misinformation was also named the biggest risk factor over the next two years (Figure B).

Figure B

Enterprises have not been so quick to consider AI deepfake risk. Aon’s Global Risk Management Survey, for example, does not mention it, though organisations are concerned about business interruption or damage to their brand and reputation, which could be caused by AI.

Huber said the risk of AI deepfakes is still emergent, and it is morphing as change in AI happens at a fast rate. However, he said that it is a risk that APAC organisations should be factoring in. “This is not necessarily a cyber risk. It’s an enterprise risk,” he said.

AI deepfakes provide a new tool for almost any threat actor

AI deepfakes are expected to be another option for any adversary or threat actor to use to achieve their aims. Huber said this could include nation states with geopolitical aims and activist groups with idealistic agendas, with motivations including financial gain and influence.

“You will be running the full gamut here, from nation state groups to a group that’s environmentally aware to hackers who just want to monetise deepfakes. I think it is another tool in the toolbox for any malicious actor,” Huber explained.

The low cost of deepfakes means low barriers to entry for malicious actors

The ease of use of AI tools and the low cost of producing AI material mean there is little standing in the way of malicious actors wishing to make use of new tools. Huber said one difference from the past is the level of quality now at the fingertips of threat actors.

“A few years ago, the [cost] barrier to entry was low, but the quality was also poor,” Huber said. “Now the bar is still low, but [with generative AI] the quality is greatly improved. So for most people to identify a deepfake on their own with no additional cues, it is getting difficult to do.”

What are the risks to organisations from AI deepfakes?

The risks of AI deepfakes are “so emergent,” Huber said, that they are not on APAC organisational risk assessment agendas. However, referencing the recent state-sponsored cyber attack on Microsoft, which Microsoft itself reported, he invited people to ask: What if it were a deepfake?

“Whether it would be misinformation or influence, Microsoft is bidding for large contracts for their enterprise with different governments and regions around the world. That would speak to the trustworthiness of an enterprise like Microsoft, or apply that to any large tech organisation.”

Loss of enterprise contracts

For-profit enterprises of any type could be impacted by AI deepfake material. For example, the production of misinformation could cause questions or the loss of contracts around the world, or provoke social concerns or reactions to an organisation that could damage its prospects.

Physical security risks

AI deepfakes could add a new dimension to the key risk of business disruption. For instance, AI-sourced misinformation could cause a riot or even the perception of a riot, causing either danger to physical persons or operations, or just the perception of danger.

Brand and reputation impacts

Forrester released a list of potential deepfake scams. These include risks to an organisation’s reputation and brand or employee experience and HR. One risk was amplification, where AI deepfakes are used to spread other AI deepfakes, reaching a broader audience.

Financial impacts

Financial risks include the ability to use AI deepfakes to manipulate stock prices and the risk of financial fraud. Recently, a finance employee at a multinational firm in Hong Kong was tricked into paying criminals US $25 million (AUD $40 million) after they used a sophisticated AI deepfake scam to pose as the firm’s chief financial officer in a video conference call.

Individual judgment is no deepfake solution for organisations

The big problem for APAC organisations is that AI deepfake detection is difficult for everyone. While regulators and technology platforms adjust to the growth of AI, much of the responsibility is falling to individual users themselves to identify deepfakes, rather than intermediaries.

This could see the beliefs of individuals and crowds impact organisations. Individuals are being asked to decide in real time whether a damaging story about a brand or employee may be true, or deepfaked, in an environment that could include media and social media misinformation.

Individual users are not equipped to sort fact from fiction

Huber said expecting individuals to discern what is an AI-generated deepfake and what is not is “problematic.” At present, AI deepfakes can be difficult to discern even for tech professionals, he argued, and individuals with little experience identifying AI deepfakes will struggle.

“It’s like saying, ‘We’re going to train everybody to understand cyber security.’ Now, the ACSC (Australian Cyber Security Centre) puts out a lot of great guidance for cyber security, but who really reads that beyond the people who are actually in the cybersecurity space?” he asked.

Bias is also a factor. “If you’re viewing material important to you, you bring bias with you; you’re less likely to focus on the nuances of movements or gestures, or whether the image is 3D. You are not using those spidey senses and looking for anomalies if it’s content you’re interested in.”

Tools for detecting AI deepfakes are playing catch-up

Tech companies are moving to provide tools to meet the rise in AI deepfakes. For example, Intel’s real-time FakeCatcher tool is designed to identify deepfakes by assessing human beings in videos for blood flow using video pixels, identifying fakes using “what makes us human.”

Huber said the capabilities of tools to detect and identify AI deepfakes are still emerging. After canvassing some tools available on the market, he said that there was nothing he would recommend in particular at the moment because “the space is moving too fast.”

What will help organisations fight AI deepfake risks?

The rise of AI deepfakes is likely to lead to a “cat and mouse” game between malicious actors generating deepfakes and those trying to detect and thwart them, Huber said. For this reason, the tools and capabilities that aid the detection of AI deepfakes are likely to change fast, as the “arms race” creates a war for reality.

There are some defences organisations could have at their disposal.

The formation of international AI regulatory norms

Australia is one jurisdiction looking at regulating AI content through measures like watermarking. As other jurisdictions around the world move towards consensus on governing AI, there is likely to be convergence about best practice approaches to support better identification of AI content.

Huber said that while this is very important, there are classes of actors that will not follow international norms. “There has to be an implicit understanding there will still be people who are going to do this regardless of what regulations we put in place or how we try to minimise it.”

Large tech platforms identifying AI deepfakes

A key step would be for large social media and tech platforms like Meta and Google to better fight AI deepfake content and more clearly identify it for users on their platforms. Taking on more of this responsibility would mean that non-expert end users like organisations, employees and the public have less work to do in trying to identify whether something is a deepfake themselves.

Huber said this would also assist IT teams. Having large technology platforms identifying AI deepfakes on the front foot and arming users with more information or tools would take the task away from organisations; less IT investment would be required in paying for and managing deepfake detection tools and in allocating security resources to manage the risk.

Adding AI deepfakes to risk assessments

APAC organisations may soon need to consider making the risks associated with AI deepfakes a part of regular risk assessment procedures. For example, Huber said organisations may need to be much more proactive about controlling and protecting the content they produce both internally and externally, as well as documenting these measures for third parties.

“Most mature security companies do third party risk assessments of vendors. I’ve never seen any class of questions related to how they are protecting their digital content,” he said. Huber expects that third-party risk assessments conducted by technology companies may soon need to include questions relating to the minimisation of risks arising out of deepfakes.